Language processing has long held a central place in artificial intelligence. OpenAI’s GPT series has already changed how people interact with machines, and its latest model, GPT-4, pushes that change further. GPT-4 not only comprehends and generates text but can also sustain in-depth conversations. This article takes a detailed look at GPT-4: what it is, what is new, how to access it, how it performs, and where it still falls short.

What is GPT-4?

OpenAI introduced GPT-4, its latest large language model, on March 14, 2023. The model surpasses its predecessor, GPT-3.5, and reaches human-level performance on many professional and academic benchmarks. On a simulated bar exam, GPT-4 scored in roughly the top 10% of test takers. However, GPT-4 still struggles in real-world scenarios: it can fabricate facts and make reasoning errors.

One notable advancement in GPT-4 is support for visual input: the model can generate text from prompts that combine text and images. This feature is still in the research phase and not yet publicly available.

Furthermore, OpenAI has enhanced their conversation model, ChatGPT, alongside the release of GPT-4. The upgraded ChatGPT Plus now supports GPT-4, amplifying its conversational capabilities to offer more powerful interactions.


What’s New in GPT-4?

The primary objective of developing GPT-4 is to improve the model’s “alignment” capability. This entails aligning the model more accurately with user intent, ensuring that the generated output is truthful, and minimizing the presence of offensive or high-risk content.



1. Performance Improvements

GPT-4 answers factual questions more accurately than GPT-3.5. It “hallucinates” less often, meaning it produces fewer factual and reasoning errors. On OpenAI’s internal factuality benchmark, GPT-4 scores 40% higher than GPT-3.5.

Furthermore, GPT-4 is more controllable (OpenAI calls this “steerability”): users can change its behavior with specific instructions, such as asking it to write in a different style, tone, or voice. For instance, you can open with a prompt like “You are a talkative data expert” or “You are a concise data expert” and then ask the model to explain a concept in data science. Guides on prompt design for GPT models cover this technique in more detail.
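As a rough illustration of the persona prompts described above, the sketch below builds two chat-style conversations that differ only in their opening instruction. It assumes the role-based message format used by OpenAI’s chat models (a "system" message followed by a "user" message). The helper name `build_messages` and the sample question are made up for this example, and nothing is sent to any API.

```python
from typing import Dict, List


def build_messages(persona: str, question: str) -> List[Dict[str, str]]:
    """Return a chat-style message list: a system message that sets the
    persona, followed by the user's question. (Hypothetical helper.)"""
    return [
        {"role": "system", "content": persona},
        {"role": "user", "content": question},
    ]


# Sample question invented for illustration.
question = "Explain what overfitting means in data science."

talkative = build_messages("You are a talkative data expert", question)
concise = build_messages("You are a concise data expert", question)

# The two conversations share the same question; only the persona differs.
print(talkative[0]["content"])
print(concise[0]["content"])
```

Keeping the persona in its own message makes it easy to swap styles without touching the question itself.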

Moreover, the model has made advancements in adhering to ethical guidelines. It performs better at refusing requests that involve illegal or inappropriate actions, showcasing improvements in following predefined boundaries.

To gain further insights into the capabilities of GPT-4 and explore its potential applications, you can refer to the article titled “5 Best GPT-4 Applications in 2023.”


2. Visual Input Usage in GPT-4

GPT-4 brings about a notable enhancement by introducing the capability to process both image and text inputs (currently limited to research previews and not publicly accessible). Users now have the flexibility to define various visual or language-related tasks by seamlessly incorporating both text and image inputs into their instructions.

Example of GPT-4 visual input:

GPT-4 reply:

The image depicts a package featuring a “Lightning Cable” adapter, consisting of three panels.

Panel 1: It displays a smartphone with a VGA connector, which is a relatively large, blue, 15-pin connector commonly used for computer monitors. The VGA connector is shown plugged into the charging port of the smartphone.

Panel 2: It showcases the packaging design for the “Lightning Cable” adapter, featuring an image representation of a VGA connector on the package.

Panel 3: This panel offers a close-up view of the VGA connector, highlighting a small Lightning connector at its end. The Lightning connector is typically used for charging iPhones and other Apple devices.

The humor in this image comes from the absurdity of plugging a large, outdated VGA connector into the small, modern charging port of a smartphone.


How to use GPT-4?

To leverage the capabilities of GPT-4 effectively, you can consider the following approaches:

Please note that the availability and specific details regarding access to GPT-4 may vary, so it’s recommended to refer to official sources or updates from OpenAI for the most accurate and up-to-date information.

1. Getting on the GPT-4 API waitlist

To directly access GPT-4, you can opt to join the API waitlist. Upon gaining access, you will have the opportunity to integrate GPT-4 into your applications and services through the provided API. However, it is important to be aware that access availability is determined on a case-by-case basis and may involve a significant cost.

Here are the steps to join the GPT-4 waitlist:

Step 1: Visit the OpenAI website and navigate to the “Products” section.

Step 2: Select “GPT-4,” which will redirect you to the dedicated GPT-4 page.

Step 3: Click on the option to “Join the API waitlist,” leading you to the GPT-4 API waitlist page.

Step 4: Complete the required information in the provided fields and click the “Join the waitlist” button.

Please note that the rollout of GPT-4 is being conducted gradually by OpenAI, and each application is carefully evaluated, which means it may take some time before you receive access to GPT-4.
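Once access is granted, integrating GPT-4 comes down to sending authenticated HTTP requests. The following is a minimal sketch, not an official client: it assumes OpenAI’s chat completions endpoint and the `gpt-4` model name, reads the key from an `OPENAI_API_KEY` environment variable (a common convention, assumed here), and uses a hypothetical helper called `build_gpt4_request`. It only constructs the request and deliberately does not send it.

```python
import json
import os
import urllib.request

# Assumed endpoint for chat-style requests.
API_URL = "https://api.openai.com/v1/chat/completions"


def build_gpt4_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a POST request asking GPT-4 to answer `prompt`.
    (Hypothetical helper for illustration.)"""
    body = {
        "model": "gpt-4",
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Fall back to a placeholder so the sketch runs without a real key.
api_key = os.environ.get("OPENAI_API_KEY", "sk-placeholder")
request = build_gpt4_request("Summarize GPT-4 in one sentence.", api_key)
print(request.get_method(), request.full_url)

# Sending is left out on purpose. With a valid key it would look like:
#   with urllib.request.urlopen(request) as response:
#       reply = json.load(response)
```

Building the request separately from sending it makes the payload easy to inspect before any tokens are billed.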

2. Subscribing to ChatGPT Plus for access to GPT-4

Another option for utilizing GPT-4 is by subscribing to ChatGPT Plus, which grants access to GPT-4 for various applications like text generation, conversations, and content creation. However, please note that this method requires a subscription fee.

Here are the steps to quickly upgrade to a ChatGPT Plus subscription:

Step 1: Visit the ChatGPT website.

Step 2: Look for the “Upgrade to Plus” option located in the bottom left corner and click on it.

Step 3: A pop-up will appear, providing a comparison between the free and ChatGPT Plus plans.

Step 4: Click on “Upgrade plan” to proceed with the upgrade. You will be redirected to a page where you can enter your credit card details and billing information. Fill in the necessary information and complete the payment.

Step 5: Once the payment is successfully processed, you will have access to both GPT-4 and GPT-3.5 models.

Step 6: Select the GPT-4 model from the drop-down menu on the ChatGPT interface and begin generating content or engaging in conversations.

Additionally, OpenAI will continue to enhance and update the model, so it’s advisable to stay updated with the latest changes.

GPT-4 Performance Benchmarks

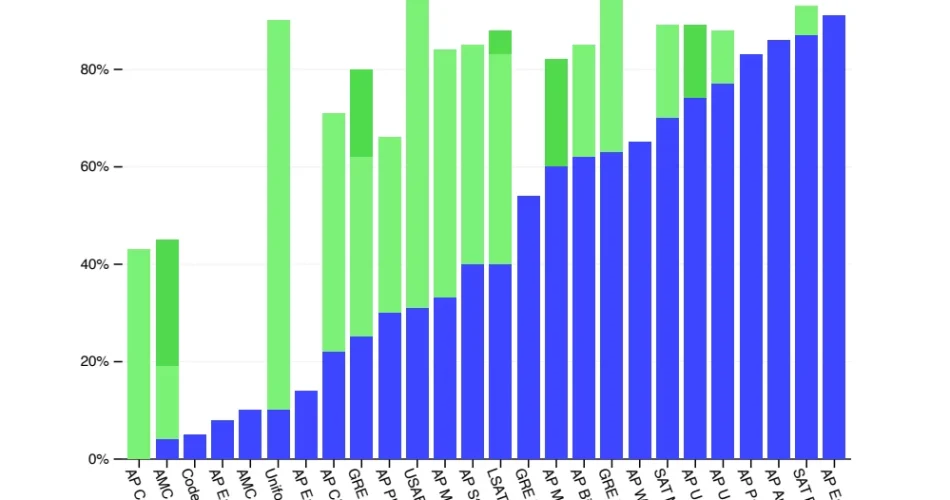

OpenAI evaluated GPT-4 on simulated versions of exams designed for humans, such as the Uniform Bar Examination for lawyers, the LSAT for law school admissions, and the SAT for university admissions. The results showed that GPT-4 performs comparably to humans on various professional and academic benchmarks.

In addition, OpenAI evaluated GPT-4 on traditional machine learning benchmarks, including multiple-choice questions spanning 57 subjects, commonsense reasoning about everyday events, and elementary science multiple-choice questions. GPT-4 outperformed existing large language models as well as most state-of-the-art models, even those specifically designed or additionally trained for a given benchmark.

To assess GPT-4’s proficiency in other languages, OpenAI used Azure Translate to translate the MMLU benchmark, which consists of 14,000 multiple-choice questions across 57 subjects, into various languages. In 24 of the 26 languages tested, GPT-4 outperformed the English-language performance of GPT-3.5 and other large language models.

Performance of GPT-3.5 and GPT-4 on various academic exams, from the GPT-4 Technical Report

Limitations of GPT-4

OpenAI explicitly recognizes that while GPT-4 has made significant strides in various areas, it still faces challenges such as “social biases, generating incorrect information (hallucinations), and responding to adversarial prompts.” These challenges are also present in earlier versions of the GPT models.

In simpler terms, GPT-4 is not without flaws. There are areas where it still requires improvement and optimization. However, OpenAI is actively addressing these challenges and has been transparent about their pursuit of solutions. They are conducting extensive research to mitigate social biases within the model, enhance its ability to identify factual information and reduce hallucinations, as well as improve its response to adversarial prompts.

OpenAI’s transparent and candid approach demonstrates their commitment to delivering a high-quality product and ensuring a positive user experience. It also showcases their deep understanding of AI ethics and their responsibility towards society. OpenAI is taking tangible steps to fulfill their promises by continually refining and enhancing GPT-4’s capabilities for the benefit of the public and humanity at large.

The Price of GPT-4

For additional details, please consult the official documentation provided by OpenAI.

| Models | GPT-4 API Basic Version | GPT-4 API Plus Version | ChatGPT Plus |
|---|---|---|---|
| Price | $0.03 / 1K prompt tokens; $0.06 / 1K completion tokens | $0.06 / 1K prompt tokens; $0.12 / 1K completion tokens | $20/mo |
| Function | Functional for normal use: make plain text requests (image input is still in limited alpha) | Limited access to the 32,768-token context version (about 50 pages of text) | Limited text input and generation |
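To make the per-token pricing concrete, here is a small sketch that estimates the cost of a single API request from the rates in the table above. The function name `estimate_cost`, the "basic" and "plus" keys, and the token counts are illustrative assumptions, and the rates themselves may have changed since this article was written.

```python
# Prices in USD per 1,000 tokens, copied from the table above.
PRICES_PER_1K = {
    "basic": {"prompt": 0.03, "completion": 0.06},
    "plus": {"prompt": 0.06, "completion": 0.12},
}


def estimate_cost(version: str, prompt_tokens: int, completion_tokens: int) -> float:
    """Estimate the USD cost of one request. (Hypothetical helper.)"""
    rates = PRICES_PER_1K[version]
    prompt_cost = (prompt_tokens / 1000) * rates["prompt"]
    completion_cost = (completion_tokens / 1000) * rates["completion"]
    return prompt_cost + completion_cost


# Example with made-up token counts: a 1,500-token prompt and a 500-token reply.
cost = estimate_cost("basic", prompt_tokens=1500, completion_tokens=500)
print(f"${cost:.3f}")  # 1.5 * 0.03 + 0.5 * 0.06 = $0.075
```

Because completion tokens cost twice as much as prompt tokens in both versions, long generated answers dominate the bill more quickly than long prompts.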

GPT-4 History

In January 2023, Sam Altman, co-founder and CEO of OpenAI, said in an interview with StrictlyVC that GPT-4 would be unveiled at some point during that year. He also dismissed as false the rumors that GPT-4 had been trained with 100 trillion parameters.

US Representatives Beyer and Lieu confirmed to The New York Times that Altman visited Congress in January 2023 and showcased GPT-4, highlighting its improved “safety controls” in comparison to other AI models.

On February 7, 2023, Microsoft introduced a new version of Bing, which it later confirmed was based on GPT-4.

During the “AI in Focus” digital kickoff event organized by Microsoft Germany on March 9, 2023, it was announced that GPT-4 would be released the following week. This information was reported by German media, specifically Heise Online.

On March 14, 2023, OpenAI officially announced the release of GPT-4. Contrary to previous rumors, GPT-4 does not include video input functionality, and the exact number of training parameters used was not disclosed.